Biological AI as Weapon and Shield: Scientists Seek Balance

AI makes it easier to design toxins and pandemic pathogens. Scientists interviewed by Nature disagree on how to control it: some call for limiting access to sof

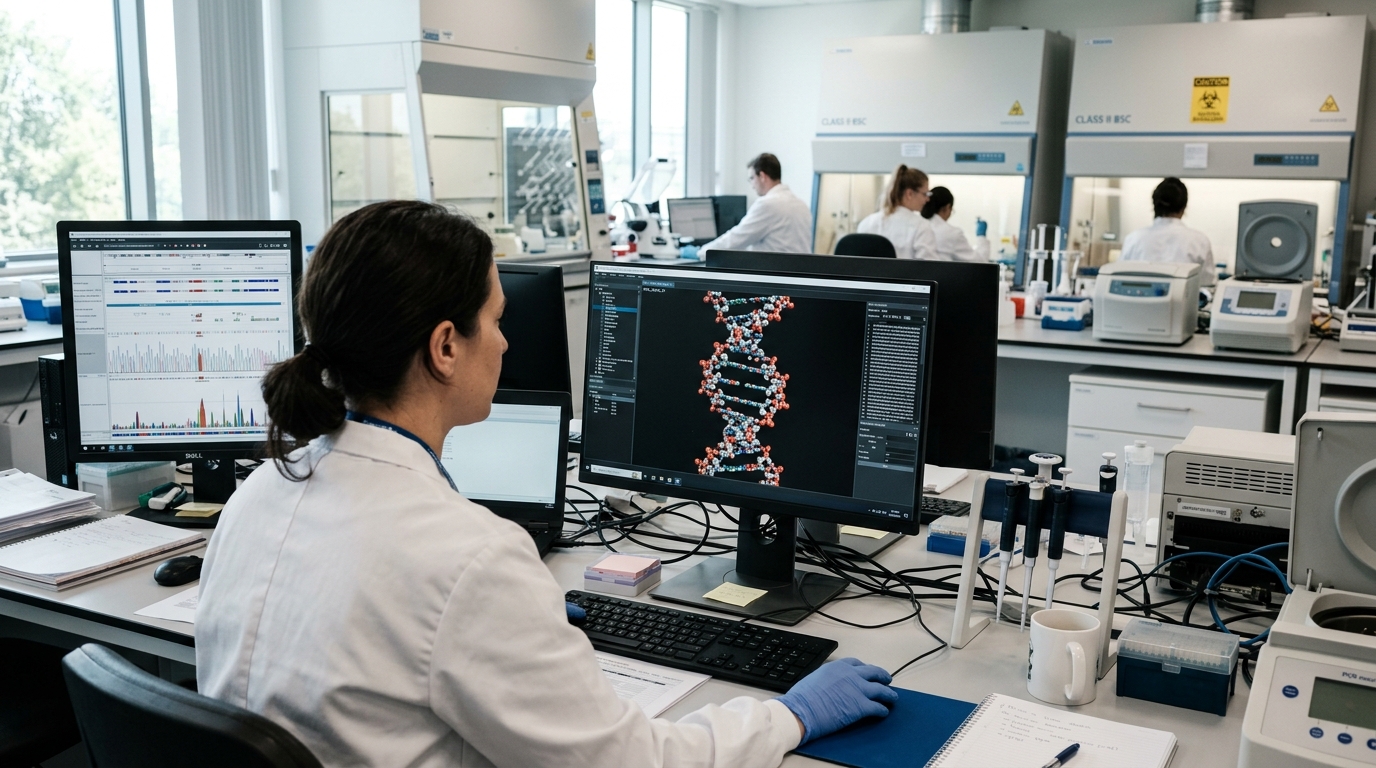

General-purpose chatbots and specialized biology models make it easier to design toxins, viruses, and other dangerous pathogens. Scientists interviewed by Nature confirm this, and it creates one of the most serious dilemmas in the history of AI: the same technology can be used both for defense and for creating biological weapons.

Why AI is dangerous for biosecurity

Researchers have long known that information on how to design pathogens is, in principle, available in the scientific literature. Until now, however, turning that information into something that could be synthesized took years of training and deep biological knowledge. AI sharply lowers this barrier to entry: a chatbot can help design a synthetic virus, and a specialized model can suggest which toxin would be most effective. Researchers worry that AI tools turn what was only theoretically available into a practical threat.

- General-purpose chatbots (ChatGPT, Claude) sometimes fail to refuse requests to describe the synthesis of dangerous substances when the question is phrased cleverly enough

- Specialized biological models, trained on vast amounts of scientific data, can accelerate the design of pathogens and the prediction of their properties

- AI tools lower the level of expertise required: you no longer need to be a professor of virology to start work on a dangerous project

How scientists propose to control this

The scientists interviewed by Nature fall into two main camps. Some want to restrict access to the AI models and training data themselves: ban or strictly regulate specialized tools for protein synthesis and limit their use to authorized researchers. Others consider this fundamentally ineffective, because the information is already published in scientific journals and strict bans would only slow legitimate research on drugs and vaccines.

An alternative approach has been called "synthesis control." Instead of regulating the software, the proposal is to monitor and block the actual ability to synthesize dangerous sequences. Laboratories and companies that supply synthetic DNA can screen every order to automatically flag attempts to synthesize known pathogens. This is slower to deploy but potentially more effective in practice.

Yet AI can save lives

A third, less prominent voice also emerges in the discussion. Some researchers point to the paradox of dual use: AI not only creates a threat but can also help neutralize it. The same models that could accelerate the design of a dangerous pathogen could also accelerate the development of vaccines, antidotes, and antitoxins.

If a potential pathogen can be designed in a few days, then in theory a countermeasure can also be developed quickly by pointing a powerful AI system at the problem. Advocates of this approach argue that it is better to invest in protective AI applications and early warning systems than in strict bans. This leaves a dilemma that remains unresolved: strict restrictions on biological AI would slow both the threat and the defense, while overly lenient rules would let the threat emerge faster than it can be neutralized.

What this means

Regulating biological AI will require great care and a combination of several approaches at once. Open access for defensive research has to be balanced against restrictions for security. For now, no such balance exists, and the discussion is only gathering momentum.