Axera AX650N: an Edge AI chip for on-device robotics as an alternative to Jetson

Axera has launched the AX650N Edge AI chip for running neural networks locally in robotics. It runs YOLO, LLMs, and VLMs directly on the device, without the cloud.

Axera has released the AX650N, a specialized Edge AI processor for running neural networks locally on robotics and IoT devices. This is the first detailed technical breakdown of the chip's architecture, with real-world tests of YOLO, multimodal LLMs, and other popular computer vision models.

What Is the Axera AX650N

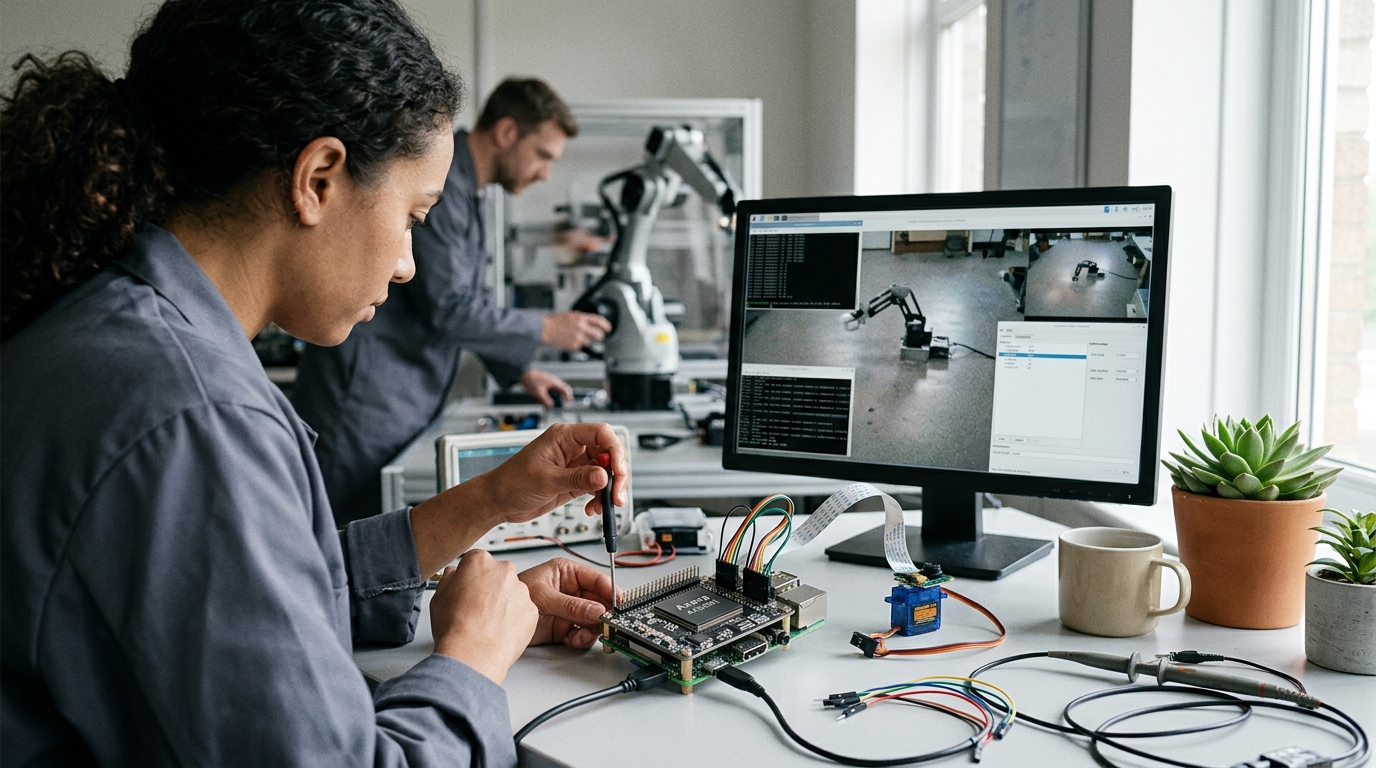

The AX650N is an SoC (System-on-Chip) with an integrated NPU (Neural Processing Unit), so the chip is purpose-built for neural network inference. Where NVIDIA's Jetson devices are general-purpose computers with a GPU, the AX650N is designed specifically for the edge: running models locally on the device without sending data to the cloud. In the tests, the chip ran on Sipeed's Maix4 Hat board, which combines a SoM (System-on-Module) with 8 GB of RAM and a baseboard that connects to a Raspberry Pi 5 over PCIe 2.0. In this configuration the AX650N acts as an external ML accelerator, much like the popular Hailo modules. The difference is that the AX650N is a complete monolithic SoC with its own CPU, not a standalone accelerator chip.
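
To get a feel for what "external accelerator over PCIe 2.0" implies, here is a back-of-envelope sketch of the link budget. It assumes a single lane (the Raspberry Pi 5's external PCIe connector is x1), counts only the theoretical payload rate after 8b/10b line coding, and ignores packet and protocol overhead, so real throughput will be lower. The frame sizes are illustrative and not taken from the article's tests.

```python
"""Back-of-envelope PCIe 2.0 transfer budget for a host-attached accelerator.

Assumptions (not measured figures from the article): PCIe 2.0, one lane by
default, theoretical payload rate only, uncompressed 8-bit RGB frames.
"""

PCIE2_TRANSFERS_PER_S = 5.0e9  # PCIe 2.0 signalling rate per lane: 5 GT/s
ENCODING_EFFICIENCY = 8 / 10   # 8b/10b coding: 8 data bits per 10 line bits


def pcie2_bytes_per_s(lanes: int = 1) -> float:
    """Theoretical one-direction bandwidth in bytes/s, before protocol overhead."""
    return lanes * PCIE2_TRANSFERS_PER_S * ENCODING_EFFICIENCY / 8


def frame_bytes(width: int, height: int, channels: int = 3) -> int:
    """Size in bytes of one uncompressed 8-bit frame."""
    return width * height * channels


def transfer_ms(num_bytes: int, lanes: int = 1) -> float:
    """Lower bound on the time to push num_bytes across the link, in ms."""
    return num_bytes / pcie2_bytes_per_s(lanes) * 1000


if __name__ == "__main__":
    for w, h in [(640, 640), (1280, 720), (1920, 1080)]:
        size = frame_bytes(w, h)
        ms = transfer_ms(size)
        print(f"{w}x{h}: {size / 1e6:.2f} MB, >= {ms:.2f} ms, <= {1000 / ms:.0f} frames/s")
```

Under these assumptions a raw 1080p RGB frame needs at least about 12.4 ms to cross the link, which is why resizing or cropping frames on the host before transfer can matter in this kind of setup.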

Chip Architecture

Inside the AX650N are specialized cores:

- CPU for system management and general-purpose computing

- NPU for neural network acceleration (the main compute unit, optimized for CNNs, transformers, and VLMs)

- DSP for audio processing and real-time signal handling

- ISP for camera operation and image preprocessing

- Two separate DDR4 memory controllers for parallel access

Memory is critical: 8 GB is enough to keep the model, image batches, and intermediate activations in RAM, avoiding slow storage access. The chip was tested on real models: YOLOv8, Depth Anything, SuperPoint, and a multimodal Qwen3 model. All tasks ran locally, without sending data to servers.
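
The point about keeping the model in RAM can be made concrete with a rough memory budget for an LLM. This is a minimal sketch under stated assumptions: the parameter count, layer count, KV-head count, head size, and precisions below are hypothetical placeholders, not the configuration of the Qwen3 model used in the tests, and the estimate covers only the weights and the KV cache, not activations, image buffers, a vision encoder, or the operating system.

```python
"""Rough RAM budget for a transformer LLM on an 8 GB edge board.

All model dimensions are hypothetical placeholders, not the configuration
of any specific Qwen3 variant or of the article's test setup.
"""
from dataclasses import dataclass

GIB = 1024 ** 3


@dataclass
class LLMConfig:
    params: float        # total parameter count
    weight_bytes: float  # bytes per weight (1.0 for INT8, 0.5 for INT4)
    layers: int          # number of transformer layers
    kv_heads: int        # key/value heads per layer
    head_dim: int        # size of each head
    kv_bytes: int        # bytes per cached K/V element (2 for FP16)


def weights_gib(cfg: LLMConfig) -> float:
    """Memory taken by the model weights, in GiB."""
    return cfg.params * cfg.weight_bytes / GIB


def kv_cache_gib(cfg: LLMConfig, context_tokens: int) -> float:
    """KV cache size in GiB for a given context length."""
    # Factor 2: one key and one value vector per layer, per KV head, per token.
    elems = 2 * cfg.layers * cfg.kv_heads * cfg.head_dim * context_tokens
    return elems * cfg.kv_bytes / GIB


if __name__ == "__main__":
    # Hypothetical ~2B-parameter model with INT8 weights and an FP16 KV cache.
    cfg = LLMConfig(params=2e9, weight_bytes=1.0, layers=28,
                    kv_heads=8, head_dim=128, kv_bytes=2)
    w = weights_gib(cfg)
    kv = kv_cache_gib(cfg, context_tokens=4096)
    print(f"weights ~{w:.2f} GiB, KV cache ~{kv:.2f} GiB, total ~{w + kv:.2f} GiB")
```

With these placeholder numbers the weights take about 1.86 GiB and a 4096-token KV cache about 0.44 GiB. The KV cache grows linearly with context length, so long prompts and multi-image VLM inputs eat into the 8 GB faster than the weights alone suggest.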

Why Edge AI Solutions

Robotics, drones, and IoT devices need local computing:

- Low latency: responses arrive without cloud round-trip delays

- Privacy: video, audio, and sensor data stay on the device

- Autonomy: works without an internet connection (critical for drones and field robots)

- Cost: no fees for cloud API requests

Three classes of solutions exist on the market. The first is NVIDIA Jetson with CUDA, which is expensive ($300+). The second is external accelerators such as Hailo. The third is NPUs built into SoCs, from vendors such as the Chinese Axera, MediaTek, and Qualcomm with its Snapdragon line. The AX650N belongs to the third class. Jetson is more versatile but costs more and needs more power and space; the AX650N is specialized for neural networks and is more compact, cheaper, and more energy-efficient.

What This Means

Edge AI is moving out of the niche of high-budget industrial projects and into the mass market. Previously the choice was stark: an expensive Jetson or the cloud with its latency. Now affordable chips like the AX650N are emerging that can run powerful neural networks locally. This opens opportunities for startups in agricultural robotics and drones, industrial automation, and security, and lets developers experiment with AI without major infrastructure costs. The second part of the analysis promises detailed benchmarks and a comparison with Jetson in performance and power consumption.