Startup Orbital plans to run AI inference from space

Startup Orbital has announced plans to build data centers in space with backing from a16z. Its satellites will carry GPU servers powered by solar energy to run AI workloads.

Orbital emerged from stealth mode in April and announced an ambitious plan: to create the first cloud infrastructure for AI inference in space. The company has received investment from Andreessen Horowitz and has already begun development.

Why Space?

On Earth, power capacity is not keeping up with the growing demand from data centers. Grids are overloaded, and building new infrastructure is expensive and slow. Orbital founder Euwyn Poon sees the solution in space, where plentiful solar energy goes unused. "There's simply not enough power capacity on Earth, and the only way is up. There's plenty of solar energy, but no one is using it," Poon says.

How It Will Work

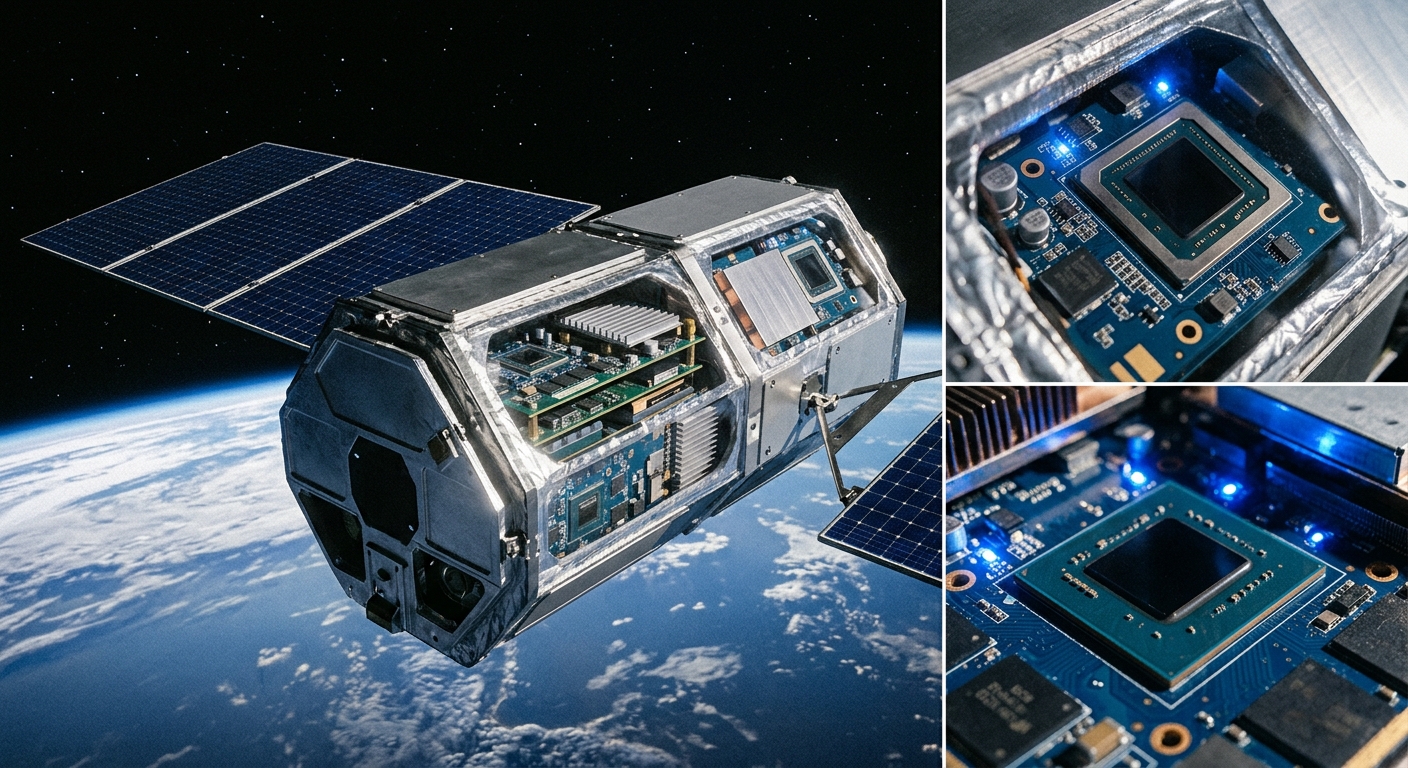

Orbital plans a constellation of satellites in low Earth orbit. Each is essentially a small data center:

- GPU server powered by solar panels the size of a tennis court

- Massive radiative cooling panels (also roughly the size of a tennis court)

- Up to 100 kW of power per satellite

- Optical laser communication channels between satellites
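
The figures in this list can be sanity-checked with a back-of-envelope calculation. The sketch below is not Orbital's design math. It assumes a doubles tennis court of 23.77 m by 10.97 m, sunlight at about 1361 W/m² near Earth, and illustrative values for cell efficiency, radiator temperature, and emissivity that do not come from the company.

```python
# Back-of-envelope check of the per-satellite figures.
# Every value marked "assumed" is illustrative and not from Orbital.

SIGMA = 5.670374419e-8            # Stefan-Boltzmann constant, W/(m^2 K^4)
SOLAR_CONSTANT = 1361.0           # sunlight intensity near Earth, W/m^2
TENNIS_COURT_M2 = 23.77 * 10.97   # doubles court, about 261 m^2


def solar_power_w(area_m2: float, efficiency: float) -> float:
    """Electrical power from a sun-facing panel in full sunlight."""
    return area_m2 * SOLAR_CONSTANT * efficiency


def radiated_power_w(area_m2: float, temp_k: float, emissivity: float,
                     sides: int = 1) -> float:
    """Heat rejected by a flat radiator (Stefan-Boltzmann law).

    Ignores sunlight and Earth's infrared absorbed by the panel.
    """
    return sides * emissivity * SIGMA * area_m2 * temp_k ** 4


if __name__ == "__main__":
    electrical = solar_power_w(TENNIS_COURT_M2, efficiency=0.30)  # assumed 30% cells
    rejected = radiated_power_w(TENNIS_COURT_M2,
                                temp_k=300.0,      # assumed radiator temperature
                                emissivity=0.90)   # assumed surface emissivity
    print(f"solar: {electrical / 1e3:.0f} kW, radiator: {rejected / 1e3:.0f} kW")
```

With these assumed values, both figures land a little above 100 kW, which is at least consistent with tennis-court-sized panels for a 100 kW satellite.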

The long-term goal is 10,000 satellites forming a distributed cloud. A request comes from Earth through a ground station to the nearest available satellite, which processes it and sends the response back through the network.
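
The routing step described above could be sketched as a nearest-neighbor choice among satellites that have free capacity. This is only an illustration: the `Satellite` record, its fields, and `nearest_available` are hypothetical names, and positions are assumed to be Earth-centered coordinates in kilometers. A real scheduler would also have to account for line of sight, link quality, and load.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Satellite:
    name: str          # hypothetical identifier
    position_km: Vec3  # Earth-centered coordinates, km (assumed representation)
    available: bool    # True if the satellite has spare GPU capacity


def nearest_available(station_km: Vec3,
                      satellites: Iterable[Satellite]) -> Optional[Satellite]:
    """Return the closest satellite that can accept work, or None if all are busy."""
    candidates = [s for s in satellites if s.available]
    if not candidates:
        return None
    # Straight-line distance only; visibility above the horizon is not checked.
    return min(candidates, key=lambda s: math.dist(station_km, s.position_km))


if __name__ == "__main__":
    station = (6371.0, 0.0, 0.0)  # a ground station on the equator
    fleet = [
        Satellite("sat-a", (6921.0, 0.0, 0.0), available=False),  # closest, but busy
        Satellite("sat-b", (6900.0, 500.0, 0.0), available=True),
        Satellite("sat-c", (0.0, 6921.0, 0.0), available=True),
    ]
    chosen = nearest_available(station, fleet)
    print(chosen.name if chosen else "no capacity")
```

In the usage example, the satellite directly overhead is skipped because it is busy, and the request goes to the next closest one.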

Why Inference Specifically?

Training large models requires powerful GPU clusters that are tightly linked together. Inference, in which an already trained model responds to requests, is less demanding: a single request can often be processed by one or a few GPUs. This allows the load to be distributed across hundreds of independent satellites, which simplifies the design of each one. "It's very simple," Poon says. "Engineers will love it." The target customers are OpenAI, Anthropic, and other labs that serve billions of requests per day.

Serious Obstacles

Putting data centers in space is not simply a matter of scaling up experience from Earth. There are critical challenges:

- Radiation. High-energy particles in space can damage GPUs and cause computation errors, so protection is needed.

- Cooling. On Earth, air carries away heat. In a vacuum, the only way is to radiate heat into space through panels.

- Maintenance. A broken satellite cannot be repaired in orbit, so reliability is critical.

- Latency. Data makes the round trip in roughly 30 milliseconds. That is fine for chatbots, but not for stock trading.
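
To put the 30-millisecond figure in context, the light-travel part of the delay can be estimated directly. The sketch below assumes an altitude of 550 km, a value typical of low Earth orbit but not stated by Orbital, and a satellite directly overhead. The function name is illustrative.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum, km/s


def round_trip_ms(path_km: float) -> float:
    """Light travel time, there and back, over a one-way path of path_km."""
    return 2.0 * path_km / SPEED_OF_LIGHT_KM_S * 1e3


if __name__ == "__main__":
    altitude_km = 550.0  # assumed altitude, not a figure from Orbital
    print(f"overhead round trip: {round_trip_ms(altitude_km):.2f} ms")
```

Under these assumptions the straight up-and-down hop takes under 4 ms, which suggests that the rest of the quoted 30 ms would come from longer slant paths, inter-satellite hops, and ground networking.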

Dr. Amit Verma from Texas A&M notes: "Deploying thousands of satellites increases failure risk with very limited repair capabilities. It will only pay off for applications that can tolerate delays."

What It Means

If Orbital overcomes the engineering obstacles, its approach could resolve the data center energy crisis. But the road is long: the first prototype is planned for 2027 and full production for 2028, while real large-scale operations are at least another 10 years away. For now, this is a great example of how AI demand is pushing engineers to look to the sky.