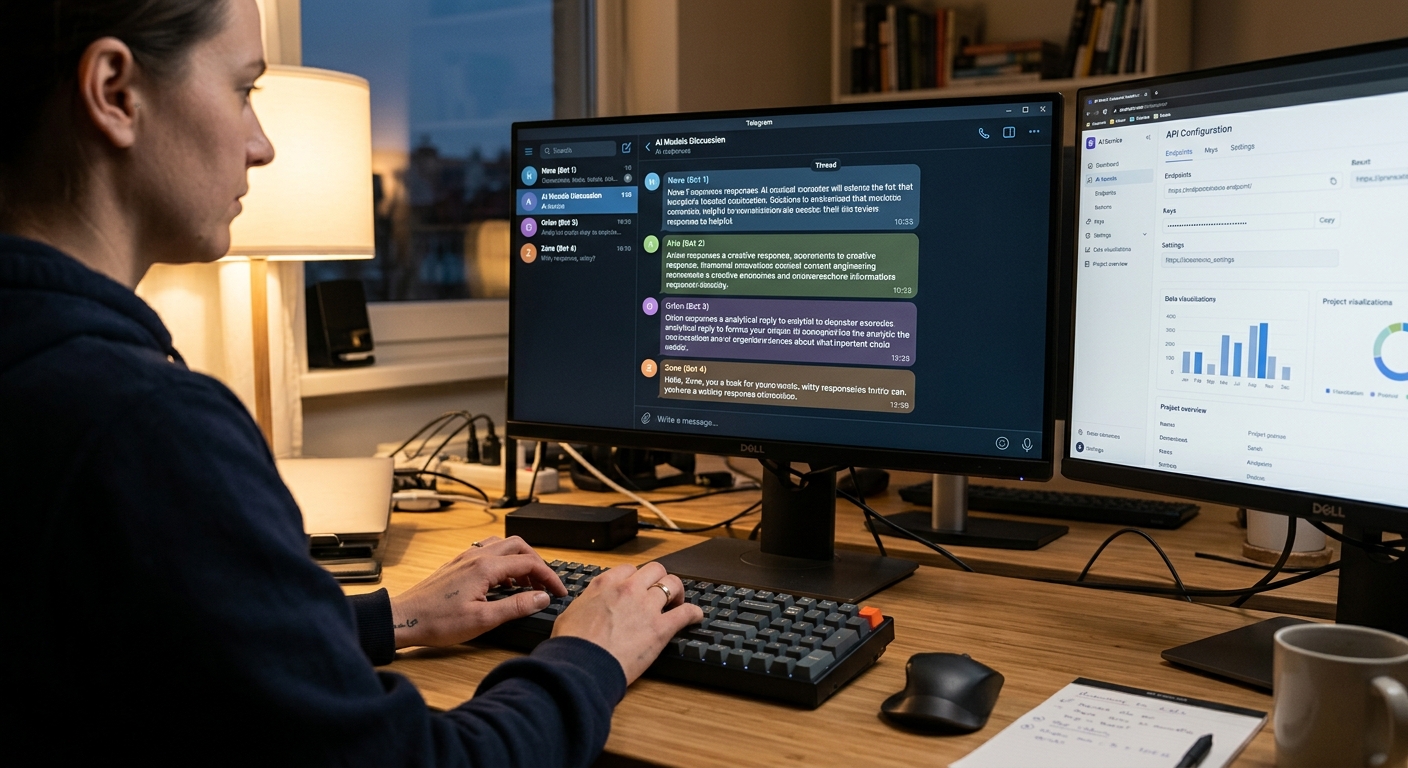

Several LLMs in one Telegram chat: how to combine Groq and Google AI


A developer on Habr built a playful Telegram bot in which several LLMs share one chat, each pretending to be an employee of a corporation. The idea started as a joke at a get-together with friends, but quickly grew into an interesting technical case study in integrating multiple models using only free APIs.

How to Squeeze Different Models Into One Chat

The original concept was simple and lighthearted: create a chat where several neural networks "work" side by side, each believing it is a real corporate employee. Each model gets its own role and backstory, and the user can switch between them at any moment, asking the same question to different LLMs. This is more than parallel testing: it is an attempt to get the models to adopt their personas and stick to them within a single dialogue.

Where to Get APIs Without Money

The first practical task was mundane but critical: find a toolkit with decent free limits. After some research, the developer settled on three building blocks:

- Groq: Llama models with very fast inference

- Google AI Studio: Gemma and Gemini models with decent free tiers

- Plain HTTP requests to connect the components
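As a rough illustration of the plain-HTTP approach, the sketch below builds one request for each provider using only the Python standard library. It is not the developer's code. The endpoint paths, header names, and response shapes are assumptions based on the providers' public REST documentation and should be checked against the current docs; the function names are hypothetical, and model identifiers are left to the caller.

```python
import json
import urllib.request

# Assumed endpoints (verify against the providers' current documentation).
GROQ_URL = "https://api.groq.com/openai/v1/chat/completions"
GEMINI_URL = ("https://generativelanguage.googleapis.com/v1beta/"
              "models/{model}:generateContent")


def groq_request(api_key, model, system, user_text):
    """Build a POST request in the OpenAI-compatible chat format."""
    body = {
        "model": model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user_text},
        ],
    }
    return urllib.request.Request(
        GROQ_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def gemini_request(api_key, model, system, user_text):
    """Build a POST request for the Gemini generateContent endpoint.

    Assumption: the chosen model accepts a systemInstruction field.
    """
    body = {
        "systemInstruction": {"parts": [{"text": system}]},
        "contents": [{"role": "user", "parts": [{"text": user_text}]}],
    }
    return urllib.request.Request(
        GEMINI_URL.format(model=model),
        data=json.dumps(body).encode("utf-8"),
        headers={"x-goog-api-key": api_key,
                 "Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=30):
    """Send a prepared request and decode the JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


def groq_text(data):
    """Extract the reply text from an OpenAI-style response."""
    return data["choices"][0]["message"]["content"]


def gemini_text(data):
    """Extract the reply text from a generateContent response."""
    return data["candidates"][0]["content"]["parts"][0]["text"]
```

Keeping request construction separate from `send` makes it possible to inspect or log the payload without spending any of the free quota.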

At first the code was fairly simple: pick a model by user command, forward the request to the corresponding API, and append the response to the shared chat context. The logic was minimal; the difficulty lay in managing state and context for every model at once.
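The routing described here can be sketched in a few lines. This is a minimal illustration, not the developer's implementation: the `Persona` and `Chat` classes, the command names, and the stub backends are all hypothetical, and a real bot would plug in functions that call the providers' APIs.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A backend takes a list of chat messages and returns the reply text.
Backend = Callable[[List[dict]], str]


@dataclass
class Persona:
    name: str         # display name in the chat (hypothetical)
    role: str         # backstory, used as the system prompt
    backend: Backend  # function that talks to the provider


@dataclass
class Chat:
    personas: Dict[str, Persona]  # keyed by command, e.g. "/llama"
    active: str                   # command of the current persona
    history: List[dict] = field(default_factory=list)  # shared context

    def handle(self, text: str) -> str:
        # A bare command switches the active persona.
        if text in self.personas:
            self.active = text
            return f"Now talking to {self.personas[text].name}"
        persona = self.personas[self.active]
        self.history.append({"role": "user", "content": text})
        # Each call gets the persona's own system prompt plus the shared history.
        messages = [{"role": "system", "content": persona.role}] + self.history
        reply = persona.backend(messages)
        # Tag the stored reply so later speakers can see who wrote it.
        self.history.append(
            {"role": "assistant", "content": f"[{persona.name}] {reply}"}
        )
        return reply


# Stub backends stand in for real API calls.
def fake_llama(messages: List[dict]) -> str:
    return "llama says hi"


def fake_gemini(messages: List[dict]) -> str:
    return f"gemini saw {len(messages)} messages"


chat = Chat(
    personas={
        "/llama": Persona("Lena", "You are Lena from accounting.", fake_llama),
        "/gemini": Persona("Gleb", "You are Gleb from IT.", fake_gemini),
    },
    active="/llama",
)
print(chat.handle("hello"))
print(chat.handle("/gemini"))
print(chat.handle("and you?"))
```

The history is shared while the system prompt is swapped per call, which is one simple way to let every model see the whole conversation while keeping its own role.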

When a Model Forgets Who It Is

Soon an amusing but persistent problem emerged: when switching between models, one of them would refuse to give up its turn and simply answer as the other, completely losing its own identity. The developer resolved most of the problem with a carefully written system prompt that explicitly "reminds" each model who it is supposed to be in this dialogue. The glitch could not be eliminated entirely, however. Models still sometimes get confused about their roles, especially in long multi-turn dialogues. According to the developer's observations, this is a fundamental limitation of LLMs themselves: they generate text well and imitate style, but often lose track of their own identity when the conversation switches between speakers.
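The article does not quote the developer's actual prompt, so the following is only a sketch of what such a "reminder" could look like, paired with a simple check for replies that open with someone else's name tag. The wording, the `[Name]` tag convention, and both function names are assumptions made for illustration.

```python
from typing import List


def build_system_prompt(name: str, role: str, others: List[str]) -> str:
    """Compose a system prompt that pins the model to a single persona."""
    other_names = ", ".join(others) if others else "nobody else"
    return (
        f"You are {name}, {role}. "
        f"Other participants in this chat: {other_names}. "
        "Messages prefixed with [Name] were written by that participant, "
        "not by you. "
        f"Always answer only as {name} and never write replies on behalf "
        "of anyone else."
    )


def speaks_as_other(reply: str, others: List[str]) -> bool:
    """Return True if the reply starts with another participant's tag."""
    stripped = reply.lstrip()
    return any(stripped.startswith(f"[{other}]") for other in others)


prompt = build_system_prompt("Lena", "an accountant at the corporation", ["Gleb"])
print(prompt)
print(speaks_as_other("[Gleb] Sure, I will fix the server.", ["Gleb"]))
```

A check like `speaks_as_other` only catches the most obvious form of impersonation; it could be used to trigger a retry, but it does not address the underlying role confusion the developer describes.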

What This Means

The case demonstrates that a multi-model interface can be assembled even with free APIs if you put effort into architecture and prompting. Full reliability in managing context and identity is not yet achievable, but for experimentation, prototyping, and learning, this is more than sufficient.