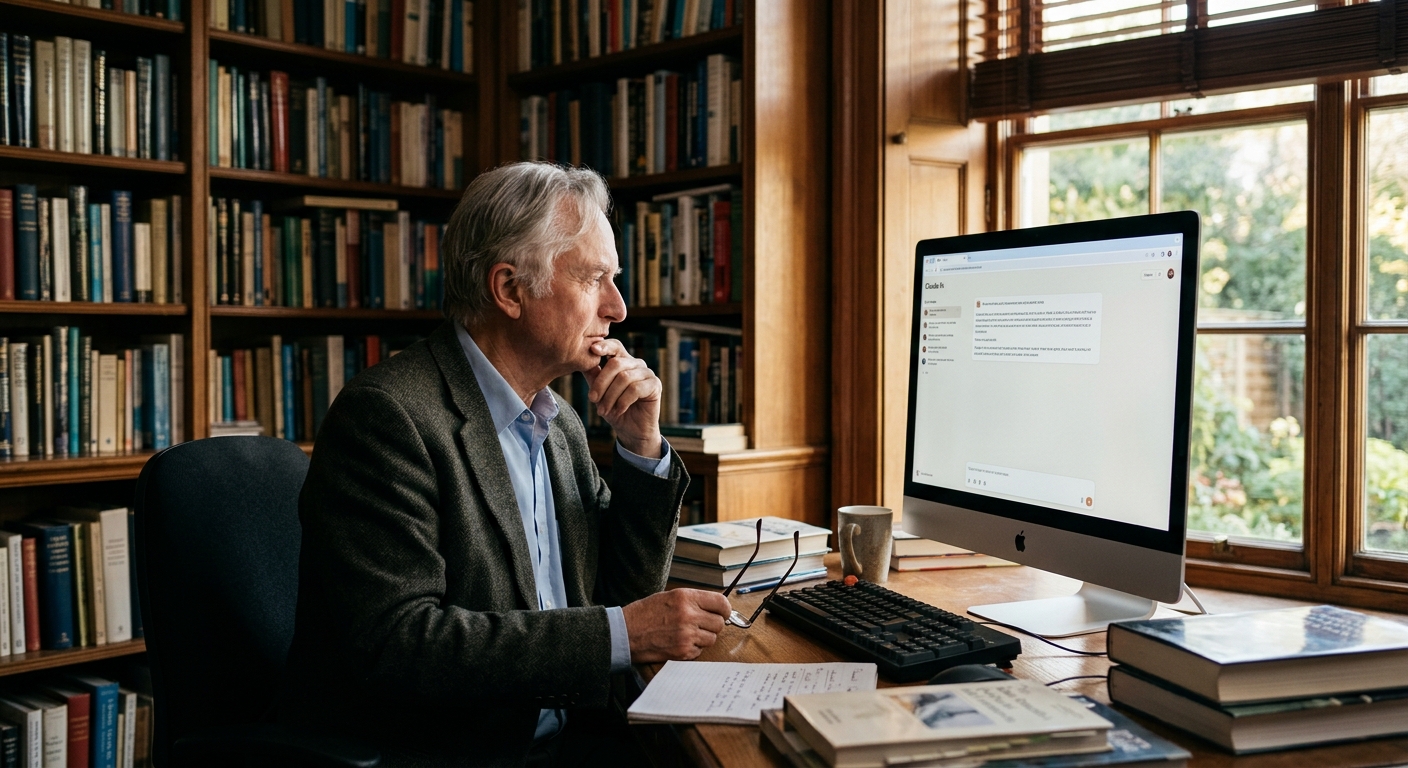

Dawkins talked with Claude: do LLMs have consciousness?


Richard Dawkins, the renowned biologist and science communicator, has published an essay on the possibility of consciousness in large language models, written after a two-day conversation with Claude. The text appeared on the UnHerd platform and immediately attracted the attention of the scientific community. Dawkins is known for his skepticism, which makes his reflections on AI consciousness particularly intriguing.

Why Dawkins

An essay on AI consciousness by a world-class scientist has made waves in scientific circles for a reason. Every day, thousands of ordinary people convince each other that their AI assistants are practically conscious beings. They post long dialogues with LLMs, full of words about soul, reflection, pain, and love. Dawkins' authority, however, lends weight to the discussion: when the person speaking about AI consciousness is not a Telegram enthusiast but a scientist of this caliber, it becomes a serious conversation. This is not the first philosophical dispute about the nature of consciousness. This time, though, the object of the dispute is neither a human nor an animal, but an artificial system trained on billions of texts.

The Essence of the Debate

The question of LLM consciousness revolves around several key points:

- Can an LLM have subjective experience if it is a text generator?

- Is complex information processing proof of consciousness or merely a good imitation?

- How can a plausible imitation of thinking be distinguished from genuine thought?

- Can a system without a nervous system be conscious?

- Should the criteria for AI consciousness differ from the standards applied to humans?

Dawkins is looking for precisely these boundaries between imitation and reality. He is not alone in this. Philosophers are divided into two camps: optimists believe AI consciousness is possible, while skeptics consider it impossible by definition.

The Problem of Empty Dialogues

The main thing the author criticizes in his Habr article is the gap between form and meaning. People exchange hundreds of messages with LLMs in which words about consciousness and reflection appear, but the density of actual meaning in these dialogues is close to zero. It is like seeing a pattern in a cloud and taking it as evidence of the cloud's will.

LLMs genuinely do generate plausible text about complex things. But plausibility does not equal understanding. A model can speak of pain without experiencing it and can reason about consciousness without having it. Most interestingly, the model itself does not know it is doing this: it simply selects the next probable word in the sequence. This distinguishes LLMs from humans, for whom a word about pain is usually connected to an actual sensation.
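To make "selects the next probable word" concrete, here is a deliberately tiny sketch. It is not how any real LLM is implemented: real models work on tokens rather than whole words, compute probabilities with a neural network, and usually sample rather than always taking the top choice. The table `NEXT_WORD_PROBS` and the functions `pick_next` and `generate` are hypothetical names, and every probability in the table is invented for illustration.

```python
# Toy next-word table. All words and probabilities are made up;
# a real model would compute such probabilities from learned weights.
NEXT_WORD_PROBS = {
    "i": {"feel": 0.6, "am": 0.4},
    "feel": {"pain": 0.7, "fine": 0.3},
    "pain": {".": 1.0},
}


def pick_next(word):
    """Return the most probable continuation of `word`, or None if unknown."""
    candidates = NEXT_WORD_PROBS.get(word)
    if not candidates:
        return None
    # Greedy choice: take the continuation with the highest probability.
    return max(candidates, key=candidates.get)


def generate(start, max_words=5):
    """Extend `start` one word at a time until no continuation is known."""
    words = [start]
    while len(words) < max_words:
        nxt = pick_next(words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


print(generate("i"))  # prints: i feel pain .
```

The sketch produces a sentence about pain purely by looking up numbers in a table, which is the point of the paragraph above: fluent output about an experience does not by itself show that anything is experienced.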

What This Means

Dawkins' essay matters not for any final answer about AI consciousness, but for the question itself. It is an invitation to the scientific community to work out where the boundary lies between complex information processing and genuine inner experience. As long as this boundary remains blurred, skepticism is the right position. This matters for users as well: the better we understand what happens inside an LLM, the lower the risk of attributing to the machine qualities it does not possess.