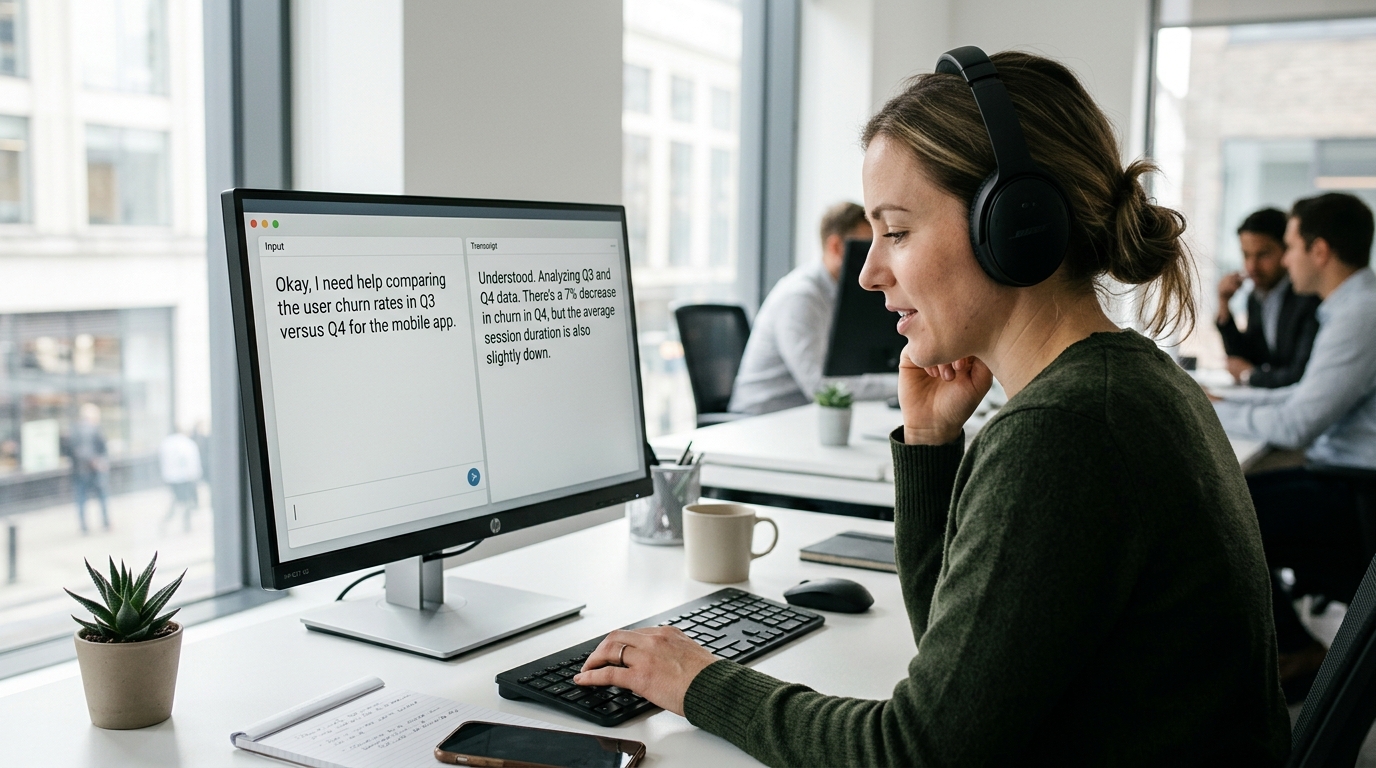

Thinking Machines is building AI that speaks and listens at the same time

Most AI models today work in turns: you write or speak, and the model waits until you finish before it responds. Thinking Machines is trying to change this with an architecture that processes your message and generates a response at the same time, like a regular phone conversation.

The Problem with the Current Approach

Mainstream language model products, from ChatGPT to Claude, work on a request-response principle. You send a complete message, the model processes it in full, and only then does it produce a response. The result feels like talking to a robot, not a person.

Real conversation is different. People listen while they form a response. You can interrupt someone, clarify a detail, or add context, and they will react on the fly without starting from scratch. Nobody waits for the other person to finish an entire speech before starting to think about an answer.

That is what gives human dialogue its natural flow. The current AI approach, by contrast, enforces a rigid sequence: input complete → processing → output complete. There is no adaptation mid-turn and no sense of two-way communication.

What Thinking Machines Does

The startup is developing a model that processes the input stream in real time and simultaneously generates an output stream. Instead of waiting for the full input, the system starts responding while receiving information from the user. This opens up several fundamentally new possibilities:

- Listening while responding: reacting to new data without reloading the context

- Natural interruptions: cutting in mid-sentence, as in a live conversation between people

- Intonation adaptation: changing tone in real time in response to voice signals

- Non-verbal signals: taking gestures and facial expressions into account in video conversations

- Minimal latency: no dead pauses between exchanges
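
To make the list above concrete, here is a minimal Python sketch of a tick-based duplex loop. It is a toy illustration, not Thinking Machines' architecture: the `DuplexAgent` class, its `step` and `plan_reply` methods, and the canned "ok:" reply are all hypothetical stand-ins for a real model. On every tick the agent may hear one input chunk and may say one output word, and input that arrives mid-reply makes it replan instead of finishing the stale answer.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DuplexAgent:
    """Toy agent that listens and speaks in the same tick (hypothetical)."""
    heard: List[str] = field(default_factory=list)    # everything received so far
    pending: List[str] = field(default_factory=list)  # words still to be spoken

    def plan_reply(self) -> List[str]:
        # Stand-in for a real model: acknowledge the latest chunk heard.
        return ["ok:", self.heard[-1]]

    def step(self, incoming: Optional[str]) -> Optional[str]:
        if incoming is not None:
            self.heard.append(incoming)
            # Input arrived while a reply may still be in progress:
            # drop the unfinished reply and plan a new one (an interruption).
            self.pending = self.plan_reply()
        # Emit at most one word per tick, or stay silent.
        return self.pending.pop(0) if self.pending else None


def run(timeline: List[Optional[str]]) -> List[Optional[str]]:
    """Feed one input slot per tick and collect one output slot per tick."""
    agent = DuplexAgent()
    return [agent.step(chunk) for chunk in timeline]


if __name__ == "__main__":
    # The user says "taxi", then interrupts with "airport" one tick later.
    print(run(["taxi", "airport", None, None]))
    # prints ['ok:', 'ok:', 'airport', None]
```

In this run the word "taxi" is never spoken: the first reply was replaced before the agent got to it, which is the interruption behavior described in the list.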

For voice assistants, this is critical. When you phone a call center or order a taxi by voice, you don't want to wait 3–5 seconds for processing. You speak, and the assistant hears and responds immediately, like a person.

The Architectural Complexity of the Problem

Processing input and generating output at the same time requires a deep architectural overhaul. Transformers, on which almost all modern LLMs are built, are typically used sequentially: read the entire context, then generate tokens one by one. Changing that principle means rethinking how attention, caching, and prediction work.

The model has to maintain a growing context from the input stream while generating output, without losing the coherence and logic of the response. The practical challenges are no less serious: response quality (will answers become hasty and incomplete?), latency (it must stay low for the conversation to feel natural), and memory management for ever-growing streams. How do you keep the thread of a conversation when the response runs in parallel with the input? How do you avoid missing a detail at the end of a message if you have already started responding to its beginning?
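
One way to picture the memory problem is a single context that interleaves input and output by time step, with a fixed-size window to keep memory bounded. The Python sketch below is an assumption made purely for illustration; the article does not say how Thinking Machines handles this. The names `duplex_run` and `count_inputs` and the window size are hypothetical, and `count_inputs` is a stand-in for a real model.

```python
from collections import deque
from typing import Callable, Deque, List, Tuple

Entry = Tuple[str, str]  # (role, text), where role is "in" or "out"


def duplex_run(
    user_chunks: List[str],
    respond: Callable[[List[Entry]], str],
    window: int = 6,
) -> Tuple[List[str], List[Entry]]:
    """Interleave input and output in one bounded context (illustrative only)."""
    # deque(maxlen=...) silently evicts the oldest entry when it is full.
    context: Deque[Entry] = deque(maxlen=window)
    outputs: List[str] = []
    for chunk in user_chunks:
        context.append(("in", chunk))   # listen: the input joins the context
        out = respond(list(context))    # speak: conditioned on what is still visible
        context.append(("out", out))    # the output joins the same context
        outputs.append(out)
    return outputs, list(context)


def count_inputs(context: List[Entry]) -> str:
    # Stand-in model: report how many input chunks are still visible.
    visible = sum(1 for role, _ in context if role == "in")
    return f"seen {visible}"


if __name__ == "__main__":
    outputs, final_context = duplex_run(["a", "b", "c", "d"], count_inputs, window=6)
    print(outputs)
    # prints ['seen 1', 'seen 2', 'seen 3', 'seen 3']
```

With a window of six entries, the fourth reply still sees only three input chunks because the first one has already been evicted. That is the trade-off behind the questions above: a bounded window keeps memory in check, but early details can be lost.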

What This Means

If this approach succeeds, talking to AI will stop feeling like operating a system. It will be a real conversation, without the rigidity and delay, and closer to human communication.

For voice assistants, chatbots, and especially call centers, this would be a major improvement. A customer calls, and the assistant hears and responds immediately, can interrupt to clarify, and adapts its answer to new information. That could noticeably improve both customer satisfaction and the speed of problem resolution.